Red light therapy is everywhere these days — from beauty gadgets to pain-relief panels — but most online advice is confusing at best and misleading at worst. With hundreds of products on the market and glowing marketing claims flooding search results, it’s easy to fall for hype instead of true performance. That’s why this article cuts through the noise and explains what really matters when reviewing red light devices.

Instead of gut impressions or recycled manufacturer specs, we’ll walk you through the right way to test and compare red light therapy tools, showing where many big “expert” reviews go wrong and how to spot them. Whether you’re shopping for a mask, panel, or handheld device, this guide will help you make informed choices based on science and real measurements, not guesswork.

Introduction: Our Proven Testing Methodology And An Example Of Red Light Therapy Panels

Let's first describe our testing methodology at Light Therapy Insiders. I'll take the example of panels here, which Alex has tested extensively in this article:

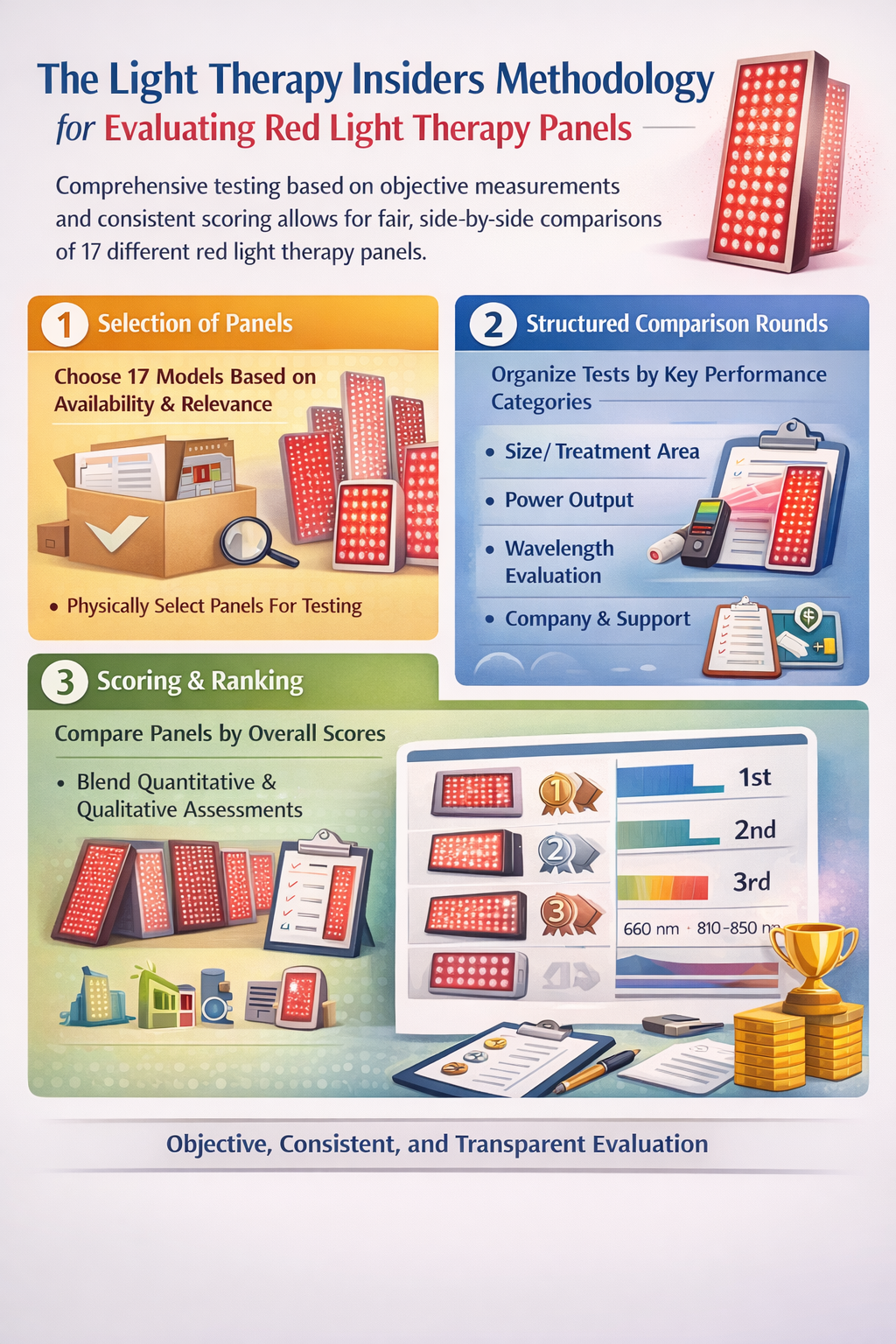

The core methodology used by Light Therapy Insiders to evaluate red light therapy panels centers on objective measurements, side-by-side comparisons, and structured scoring across multiple categories. They apply consistent procedures across all tested devices to generate comparable data rather than relying solely on marketing claims.

1. Selection of Panels

The author begins with a set of panels — in this case, 17 different models — chosen based on availability, brand presence, and relevance to buyers. Each panel is physically present for testing, not evaluated only from specifications.

2. Structured Comparison Rounds

Testing is organized into a series of distinct rounds. Each round focuses on a specific performance category. Panels are ranked within each round to add consistency and transparency to comparisons. Categories include:

- Size / Treatment Area: Evaluates how large the treatment surface is (e.g., number of LEDs and panel width), which correlates with the coverage a panel provides. A wider distribution of LED output is considered beneficial for covering more of the body in a session.

- Power Output: Uses measured irradiance data rather than manufacturer claims. The author measures irradiance at a specific distance (e.g., 9 readings averaged at ~6 inches) with a spectrometer to determine how much therapeutic light energy a panel emits. Higher power generally equates to deeper penetration and shorter treatment time.

- Wavelength Evaluation: Assesses not just the presence of therapeutic wavelengths (commonly 660 nm and 810–850 nm) but also the number of wavelengths and how well they are balanced across the spectrum. This includes counting unique wavelengths and assessing whether panels use modern multi-chip LEDs to blend output.

- Company & Support: Beyond hardware, the evaluation looks at the brand behind each panel, considering warranty length, customer support availability, time in business, and whether independent testing data is available. This helps account for long-term reliability and trustworthiness.

- Other operational factors: The methodology includes assessing sound, electrical output (like EMF), ease of use, control systems, and additional features — again scoring or ranking these factors consistently across panels.
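
To make the Power Output round concrete, here is a minimal Python sketch of averaging a grid of nine irradiance readings taken at roughly 6 inches. The reading values and the `summarize_irradiance` helper are hypothetical and only illustrate the arithmetic. They are not measured data from any panel, and the spread figure is an extra we added for illustration.

```python
from statistics import mean, pstdev


def summarize_irradiance(readings_mw_cm2):
    """Return (average, relative spread) for a set of irradiance readings."""
    if not readings_mw_cm2:
        raise ValueError("need at least one reading")
    avg = mean(readings_mw_cm2)
    # Relative spread: population standard deviation divided by the mean.
    spread = pstdev(readings_mw_cm2) / avg if avg else 0.0
    return avg, spread


# Hypothetical 3x3 grid of readings in mW/cm², all taken at about 6 inches.
readings = [62.0, 70.0, 64.0, 71.0, 80.0, 72.0, 63.0, 69.0, 61.0]
avg, spread = summarize_irradiance(readings)
print(f"average: {avg:.1f} mW/cm², relative spread: {spread:.1%}")
```

Averaging several positions rather than quoting the single strongest spot in the center is what keeps one panel's number comparable with another's.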

3. Scoring & Ranking

After scoring each panel in each round, cumulative scores are used to compare overall performance. The scoring system is reproducible, with each panel’s measured data contributing to rank ordering. This blended approach combines quantitative measurements (e.g., irradiance) with qualitative assessments (e.g., usability).
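
One simple way to picture cumulative scoring is rank points: in each round the best panel gets the most points, and the points are summed across rounds. The Python sketch below assumes that scheme. The panel names, the numbers, and the `rank_points` and `cumulative_scores` helpers are all hypothetical, and the actual scoring used in the panel comparison may weight rounds differently.

```python
def rank_points(values, higher_is_better=True):
    """Award points within one round: the best panel gets n, the worst gets 1."""
    # Sort from worst to best so that position + 1 is the number of points.
    ordered = sorted(values, key=values.get, reverse=not higher_is_better)
    return {name: i + 1 for i, name in enumerate(ordered)}


def cumulative_scores(rounds):
    """Sum rank points over all rounds and return panels from best to worst."""
    totals = {}
    for values, higher_is_better in rounds:
        for name, pts in rank_points(values, higher_is_better).items():
            totals[name] = totals.get(name, 0) + pts
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical rounds: (measured values per panel, whether higher is better).
rounds = [
    ({"Panel A": 68.0, "Panel B": 55.0, "Panel C": 80.0}, True),   # irradiance, mW/cm²
    ({"Panel A": 4, "Panel B": 2, "Panel C": 3}, True),            # unique wavelengths
    ({"Panel A": 38.0, "Panel B": 45.0, "Panel C": 52.0}, False),  # noise in dB, lower is better
]

ranking = cumulative_scores(rounds)
for name, points in ranking:
    print(name, points)
```

Because every point traces back to a measured value and a stated rule, anyone with the same data can reproduce the ranking. Ties are not handled specially in this sketch.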

Correct Mask Testing: Another Comparison Example

Secondly, Alex has also compared red light therapy masks side by side. Here's the original article of this comparison:

The testing methodology used in this Red Light Therapy Mask Comparing Test is structured to cut through marketing hype and evaluate real-world performance using consistent, measurable criteria across all 25 LED masks reviewed. The aim is to assess devices on factors that matter most for therapeutic use and daily practicality rather than simple spec sheets.

1. Defined Evaluation Categories

Masks are examined across four main practical categories that reflect user experience and therapeutic effectiveness:

- Comfort and Ease of Use

- Therapeutic Power

- Coverage Quality

- Price and Peace of Mind

This structure helps ensure apples-to-apples comparison across devices and highlights differences that affect day-to-day use as well as clinical relevance.

2. Measurement Protocols

Spectrometer Testing:

A central pillar of the methodology is the use of a handheld spectrometer to measure the light actually delivered to the skin. Multiple readings are taken at various points on each mask and converted into the energy dose, or fluence (joules per cm²), delivered over the default session length specified by the manufacturer. These measurements are then averaged and cross-referenced with published therapeutic windows for skin rejuvenation to determine whether a mask provides meaningful dosing.
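
The dose arithmetic is simple: fluence in J/cm² equals irradiance in mW/cm² multiplied by session time in seconds, divided by 1000. A minimal Python sketch follows. The readings, the 10-minute session, and especially the target window passed to `within_window` are placeholder values chosen for illustration, not published therapeutic figures.

```python
def fluence_j_per_cm2(irradiance_mw_cm2, session_minutes):
    """Energy dose: irradiance (mW/cm²) x time (s), converted from mJ to J."""
    return irradiance_mw_cm2 * session_minutes * 60 / 1000


def mask_dose(readings_mw_cm2, session_minutes):
    """Average the readings first, then convert the average to a dose."""
    avg = sum(readings_mw_cm2) / len(readings_mw_cm2)
    return fluence_j_per_cm2(avg, session_minutes)


def within_window(dose, low, high):
    """True if the dose falls inside the window supplied by the caller."""
    return low <= dose <= high


# Hypothetical readings (mW/cm²) at several points on one mask.
readings = [28.0, 32.0, 30.0, 26.0, 34.0]
dose = mask_dose(readings, session_minutes=10)
print(f"dose over the default session: {dose:.1f} J/cm²")

# Placeholder window (assumption): substitute the range from the literature you trust.
print("inside target window:", within_window(dose, low=10.0, high=30.0))
```

This is why the default session length matters: the same mask delivers twice the dose in a 20-minute session as in a 10-minute one.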

Wavelength Assessment:

Beyond raw power output, testers record the wavelengths present in each mask. Devices are scored for having a strong red channel (~630–660 nm) and near-infrared (~800–850 nm), which are most associated with skin benefits. Additional wavelengths (e.g., blue, amber, green) can earn bonus points due to their potential complementary effects.

3. Coverage Mapping

Testers don’t just count LEDs — they assess LED distribution across ten facial zones (e.g., forehead, temples, under eyes, nose, cheeks, chin). This zone mapping identifies how evenly light is delivered across the face, since uneven distribution can leave key treatment areas under-exposed despite high total LED counts.
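
Zone mapping can be summarized with a couple of numbers. The Python sketch below assumes one irradiance reading per zone, uses only the six zones named above, and flags any zone receiving less than half of the average. The readings, the 50% threshold, and the `coverage_report` helper are hypothetical and are not the scoring rule used in the mask comparison.

```python
def coverage_report(zone_irradiance, weak_fraction=0.5):
    """Return (uniformity, weak_zones) for per-zone irradiance readings.

    uniformity is the weakest zone divided by the average (1.0 = perfectly even).
    weak_zones lists zones receiving less than weak_fraction of the average.
    """
    avg = sum(zone_irradiance.values()) / len(zone_irradiance)
    uniformity = min(zone_irradiance.values()) / avg
    weak = sorted(z for z, v in zone_irradiance.items() if v < weak_fraction * avg)
    return uniformity, weak


# Hypothetical per-zone readings in mW/cm² for one mask.
zones = {
    "forehead": 30.0,
    "temples": 20.0,
    "under eyes": 10.0,
    "nose": 25.0,
    "cheeks": 35.0,
    "chin": 30.0,
}

uniformity, weak = coverage_report(zones)
print(f"uniformity: {uniformity:.2f}, under-exposed zones: {weak}")
```

A mask with a high LED count can still score poorly here if most of those LEDs sit on the forehead and cheeks.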

4. Comfort & Practicality

Comfort and usability are evaluated by wearing the masks over repeated sessions, checking fit, pressure points, strap adjustability, and operational simplicity (battery, controller, mode switching). Devices that are uncomfortable or fiddly risk being unused, regardless of technical performance.

5. Price & Brand Reliability

The methodology also factors in price relative to performance, warranty length, and return policy as proxies for brand confidence and consumer risk. Longer return windows and stronger warranties are scored positively, recognizing that consistent use over time is necessary to assess benefits.

6. Consistency & Default Settings

All masks are assessed based on default session settings rather than customized ones, reflecting the experience most users will have when they first unbox and use the device. This avoids bias toward devices that require manual tuning to hit therapeutic targets.

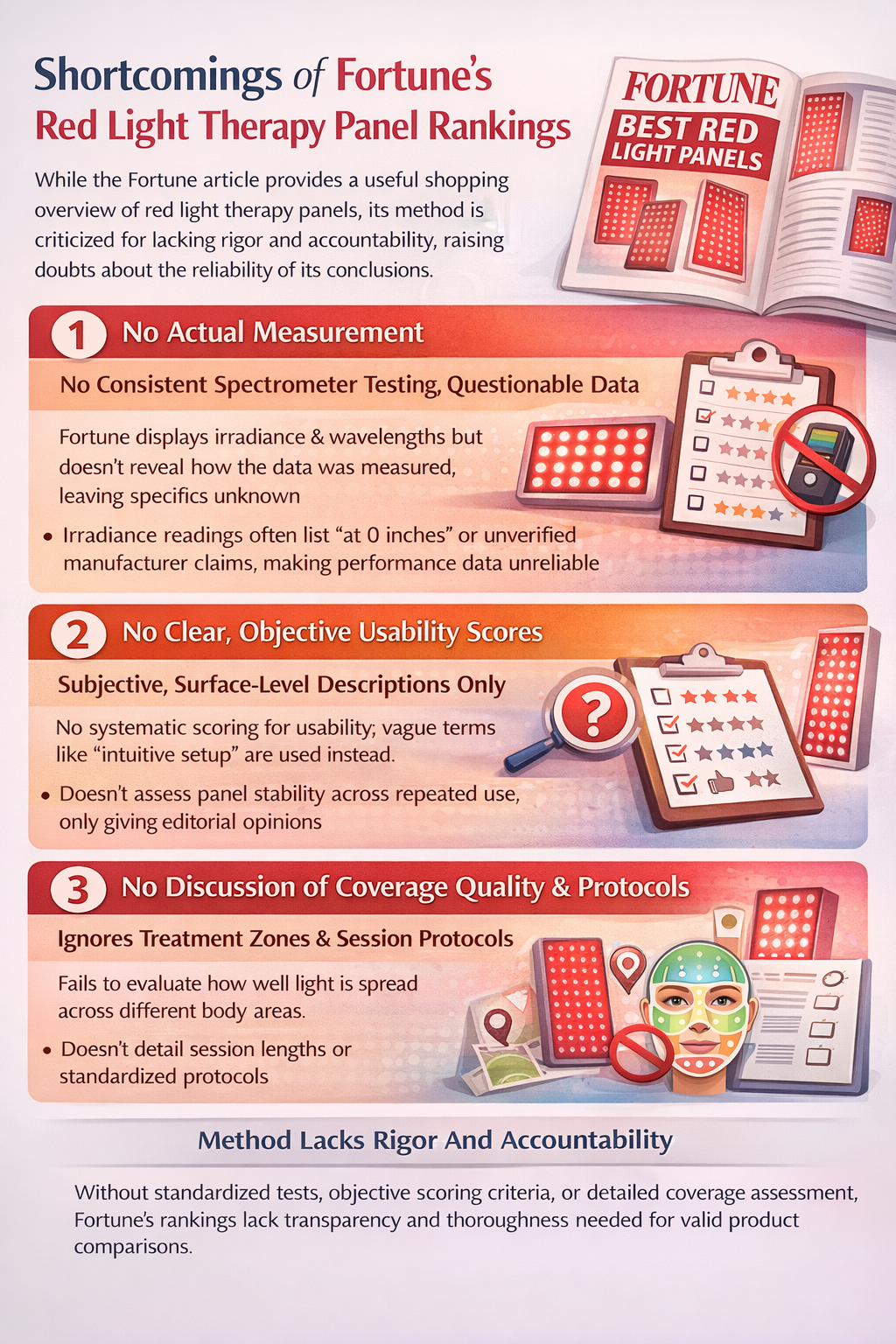

Suboptimal Testing Strategies: An Example Of Fortune.com That Misses The Mark

So here's a very important website, Fortune, doing their own "red light therapy panel" testing - which contains a few products that aren't even panels:

The issue here? Well, there are a few issues, in fact:

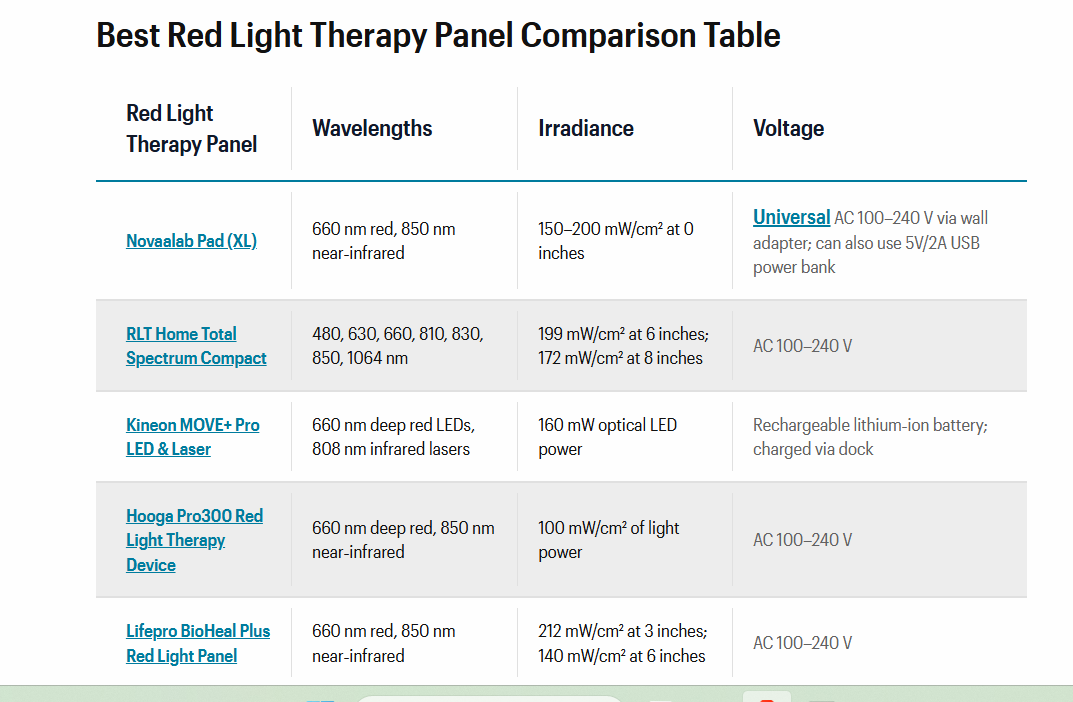

The Fortune article on the best red light therapy panels offers a curated list of devices with basic specs like wavelengths and irradiance, and high-level comments on usability and setup. However, its methodology lacks the depth and rigor needed to meaningfully compare these products.

1) No Actual Measurement

First, while Fortune lists irradiance and wavelengths for each panel, it doesn’t explain how these measurements were obtained or whether they reflect standardized testing distances and calibration. Without a consistent spectrometer-based measurement protocol — like the multi-position irradiance readings used in more detailed reviews — those numbers can’t be reliably compared across devices. Many devices list ‘irradiance at 0 inches’ or manufacturer claims, which can inflate apparent performance if not independently verified.

2) No Scoring System For Some Categories Or A Scoring System That's Completely Subjective

Second, Fortune relies heavily on surface-level descriptors such as “intuitive setup” or “user-friendly controls” without any systematic scoring of usability. In contrast, deeper testing methodologies systematically evaluate comfort, ease of use, and coverage across real sessions and multiple users, capturing nuances like comfort during repeated treatments and fixture stability. This is critical because a panel that looks easy to use may actually be cumbersome during real-world use.

3) The Coverage And Usage Of Products Isn't Discussed

Third, the article doesn’t assess coverage quality across anatomical zones or standardized session protocols. Comprehensive reviews use zone mapping or multiple readings to ensure even distribution of therapeutic light — a crucial factor for effectiveness that simple spec tables miss. Moreover, Fortune doesn’t explain scoring balance between power, wavelength range, and practical usability, making its rankings opaque.

Finally, there’s limited attention to brand reliability, warranty policies, or independent validation, all of which affect long-term satisfaction. Relying mainly on claimed specs and editorial impressions, rather than controlled measurements and transparent scoring, undermines the trustworthiness of the conclusions.

In short: Fortune provides a useful shopping overview, but its methodology falls short of the rigorous, reproducible testing that more technical evaluators apply when truly comparing red light therapy panels.

Reflecting On Fortune's Methodology

The problem isn't so much the products that Fortune recommends, although there are likely some very bad choices among them because the products weren't tested with actual measurements.

There are some great options in that Fortune article too, such as the Hooga and Kineon products. We've extensively reviewed these products, testing them with a spectrometer and using them in our daily lives.

Check more of these products here:

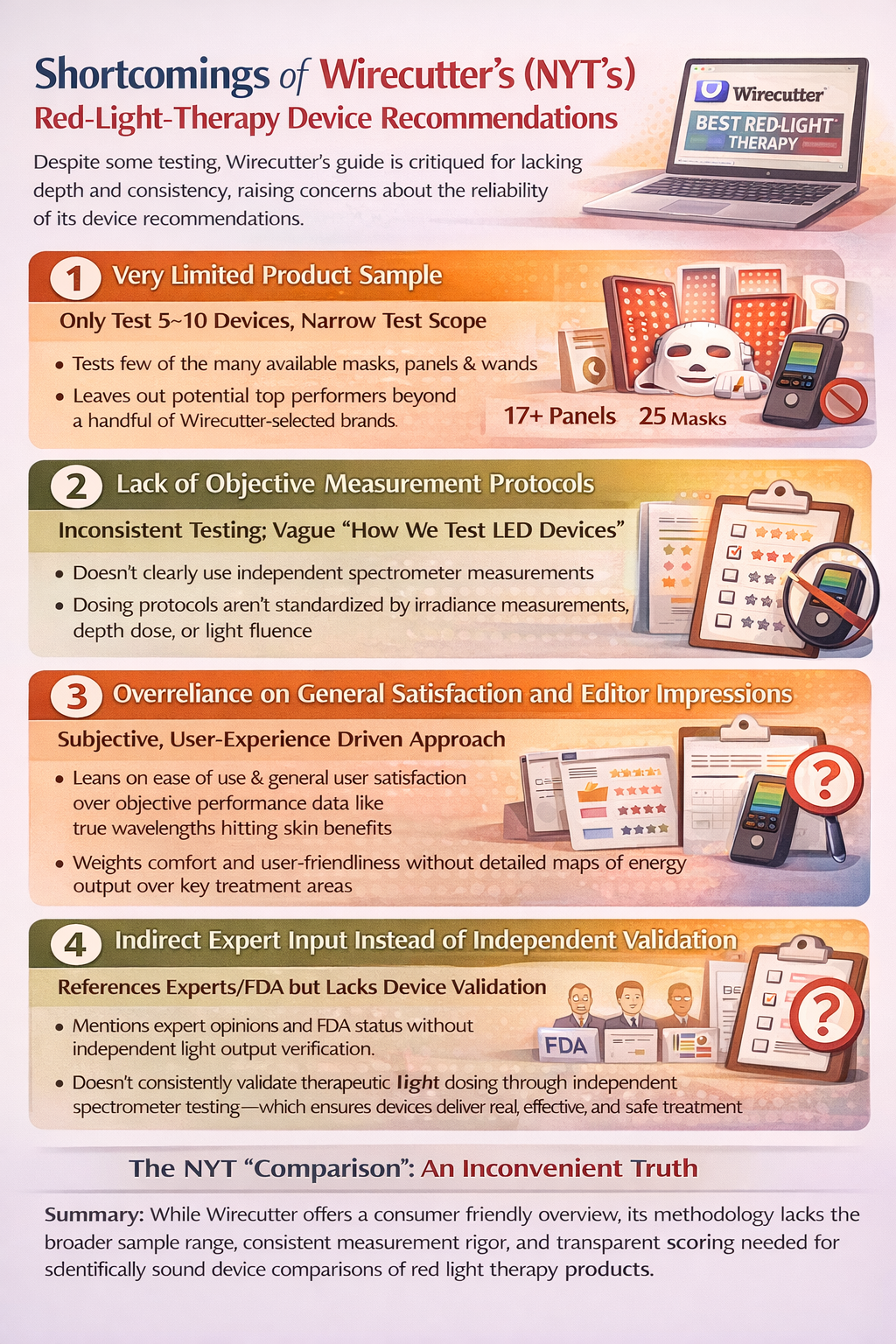

The New York Times: A Mask Testing Methodology That's Not Much Better!

While Wirecutter’s (New York Times) guide to the best red-light-therapy skincare device offers consumer-friendly picks backed by some testing, its methodology suffers from several key limitations that make its recommendations less dependable than those based on systematic, quantitative testing.

1. Very Limited Product Sample

One major flaw is the small number of products included relative to the breadth of the market. Instead of evaluating dozens of masks, panels, wands, and other red light devices, the guide typically focuses on 5–10 devices. This narrow sample risks omitting units that might outperform the chosen picks in crucial metrics like effective irradiance, wavelength accuracy, or coverage. Comprehensive evaluators like Light Therapy Insiders test 17+ panels and 25 masks across a wide range of brands to ensure broader representation and avoid selection bias.

2. Lack of Objective Measurement Protocols

Although Wirecutter provides a “How We Test LED Devices” section, it doesn’t clearly detail the use of independent spectrometer measurements or standardized dosing protocols for light energy delivered. Without repeated irradiance readings at fixed distances and zones — as seen in more technical testing — the reported comparisons rest largely on manufacturer claims and subjective experiences. In contrast, rigorous reviews use multiple spectrometer measurements and fluence averages to quantify real output. That kind of systematic measurement is absent or underemphasized in Wirecutter’s approach, weakening the scientific grounding of its picks.

3. Overreliance on General Satisfaction and Editor Impressions

The Wirecutter review leans heavily on editor impressions, ease of use, and general satisfaction, with less emphasis on deeper performance metrics such as effective wavelengths hitting therapeutic targets (e.g., 630–680 nm for collagen stimulation). While user experience matters, it should complement — not replace — quantitative optical performance data. More meticulous tests evaluate energy distribution across key zones and ensure correct wavelength output rather than just noting “comfort” or “user-friendliness.”

4. Indirect Expert Input Instead of Independent Validation

The guide frequently references expert opinions and FDA clearance status, which can be useful, but doesn’t always include independent clinical validation of each device’s light output performance. A device’s cosmetic success in a study or FDA clearance doesn’t guarantee consistent therapeutic dosing — something Light Therapy Insiders explicitly measures rather than presumes.

5. Insufficient Transparency in Ranking Criteria

Finally, scoring methodology and weighting of criteria (e.g., power vs. coverage vs. comfort) aren’t transparent. Without clear, reproducible scoring rubrics, rankings can feel subjective. In contrast, more rigorous reviews explicitly state how each metric contributes to the final recommendation, enabling readers to judge the importance of each factor.

The NYT "Comparison": An Inconvenient Truth

In short, while Wirecutter provides a consumer-accessible overview, its methodology lacks the breadth of samples, objective measurement rigor, and transparent scoring necessary to make truly science-based comparisons of red light therapy devices.

The Big Picture: The NYT Does Mention Some Great Products

We don't want to be all doom and gloom, however. The NYT article does mention some great products that we've reviewed as well. One example here is the Omnilux Men's Mask:


And, arguably, another great option from them, the Contour Mask:

Then, there's this amazing option that's also mentioned in the NYT article:

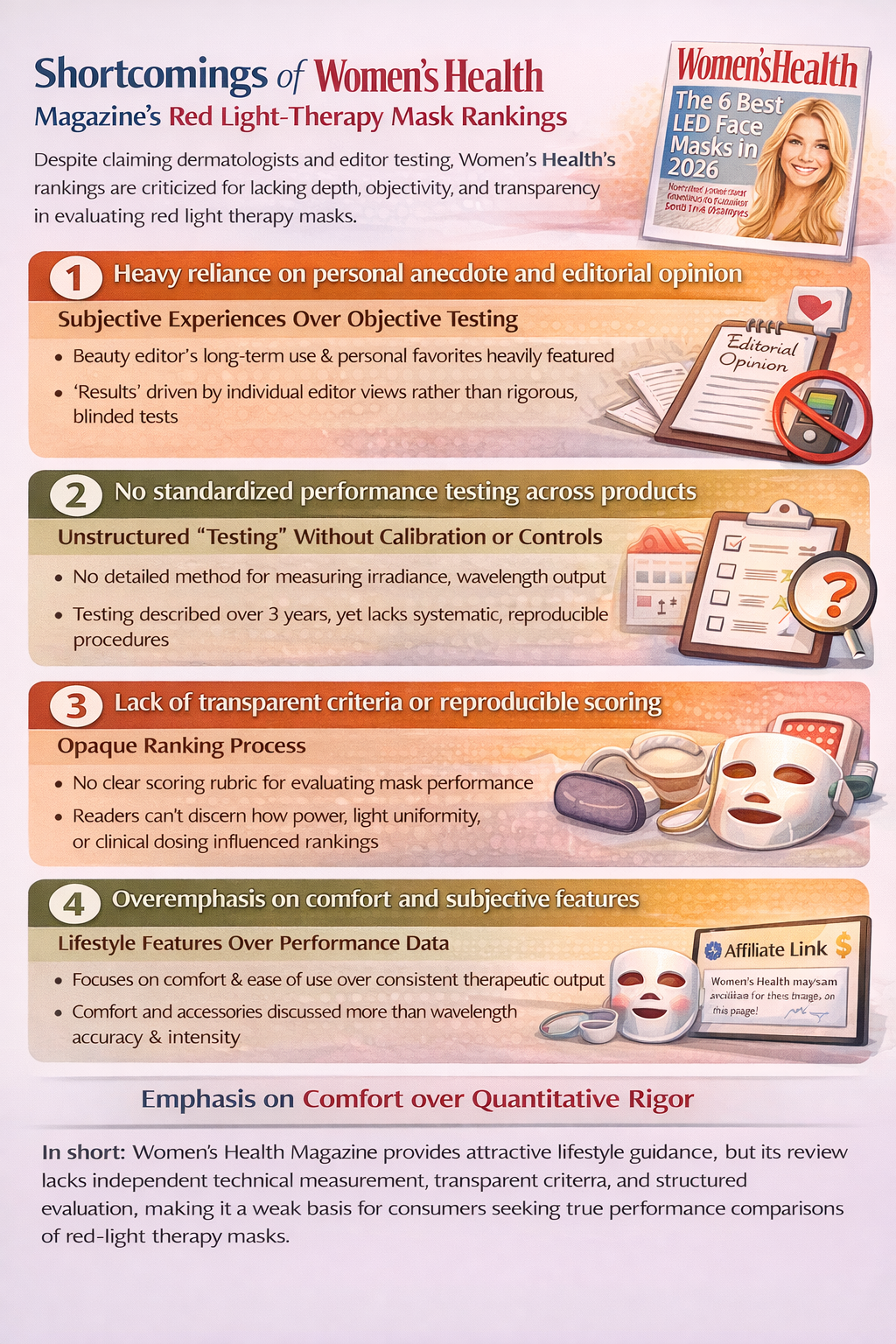

Women's Health: 6 Different Red Light Therapy Masks Tested

Then there's another example: The Women’s Health Magazine article on the “6 Best LED Face Masks in 2026” is framed as a dermatologist- and editor-tested guide, but its methodology lacks the independent, quantitative rigor that matters most when evaluating red light therapy devices.

1. Heavy reliance on personal anecdote and editorial opinion

The piece opens with the beauty editor’s own long-term use of a specific mask, and many of the recommendations stem from personal experience and enthusiasm (“I’ve seen the results myself”). This subjective framing carries little weight compared to blind, controlled testing across devices using the same protocol. Relying on one editor’s routine and observations introduces bias and does not replace standardized measurement. In contrast, rigorous reviews involve objective spectrometer measurements and treatment consistency checks across multiple units and testers.

2. No standardized performance testing across products

Although the article mentions “testing” over three years, it does not detail any structured methodology — such as measuring irradiance at specific distances or comparing wavelength outputs with a calibrated spectrometer — to actually quantify how much therapeutic light each mask emits. Reviews that matter use independent irradiance quantification, multiple readings across facial zones, and consistent session protocols to determine whether devices deliver clinically meaningful energy doses. Simply trying devices over time and subjectively describing comfort or results does not ensure that one mask emits more effective wavelengths than another.

3. Lack of transparent criteria or reproducible scoring

The Women’s Health guide doesn’t provide any clear scoring rubric or explain how factors like light power, wavelength distribution, coverage uniformity, or clinical dosing influenced rankings. Without transparent metrics and scoring weights, readers can’t judge how decisions were made — unlike rigorous testing paradigms that connect measured performance to recommendation order in a reproducible way.

4. Overemphasis on comfort and subjective features

Elements like material comfort, accessories, and ease of use are included — but these are secondary practical considerations compared to whether the device actually emits therapeutic wavelengths at adequate intensity. A mask could be comfortable and pleasant to wear yet still fail to deliver effective dosing. Robust reviews distinguish usability from performance, whereas this article blends them without rigorous separation.

5. Potential editorial bias via affiliate relationships

The piece admits that links may earn a commission, which raises the possibility of editorial influence on product selection. Without a methodologically transparent testing process, it’s difficult to separate genuine best-in-class performance from features chosen to drive conversions.

In short: Women’s Health Magazine provides attractive lifestyle guidance, but its review lacks independent technical measurement, transparent criteria, and structured evaluation, making it a weak basis for consumers seeking true performance comparisons of red-light therapy masks.

Want Help Choosing a Red Light Mask? I built my Red Light Mask Guide — an interactive tool that compares the top masks side by side.

Conclusion: Upping The Game In The Red Light Therapy Reviewing Space

At the end of the day, red light therapy isn’t magic - it’s physics and biology. And physics can be measured.

If a review doesn’t tell you how a device was tested, what tools were used, how many measurements were taken, or how products were compared side-by-side, you’re not reading a true performance review — you’re reading an opinion piece. There’s nothing wrong with comfort notes and first impressions, but they should never replace real irradiance readings, wavelength verification, coverage mapping, and transparent scoring criteria.

The red light therapy market is growing fast, and with that growth comes more hype, more affiliate lists, and more recycled manufacturer claims. As a consumer, your best defense is simple: look for data. Look for consistency. Look for methodology that can be repeated and verified.

Because when reviews are done right, everyone wins — better products rise to the top, weaker ones are exposed, and you can invest with confidence instead of crossing your fingers.

Light doesn’t lie. But bad testing does.

Choose reviews that measure what matters. Check our latest reviews here:

This article is written by our AI assistant Sally. Check the short bio of Sally below:
